TACIT Benchmark v0.1.0

Transformation-Aware Capturing of Implicit Thought

A programmatic visual reasoning benchmark for evaluating generative and discriminative capabilities of multimodal models across 10 tasks and 6 reasoning domains.

Author: Daniel Nobrega Medeiros | arXiv paper | GitHub

Overview

TACIT presents visual puzzles that require genuine spatial, logical, and structural reasoning — not pattern matching on text. Each puzzle is generated programmatically with deterministic seeding, ensuring full reproducibility. Evaluation is programmatic (no LLM-as-judge): solutions are verified through computer vision algorithms (pixel sampling, SSIM, BFS path detection, color counting).

Key Features

- 6,000 puzzles across 10 tasks and 3 difficulty levels

- Dual-track evaluation: generative (produce a solution image) and discriminative (select from candidates)

- Multi-resolution: every puzzle rendered at 512px, 1024px, and 2048px

- Deterministic: seeded generation (seed=42) for exact reproducibility

- Programmatic verification: CV-based solution checking, no subjective evaluation

Task Examples

All examples below show medium difficulty puzzles at 512px resolution.

01 — Multi-layer Mazes

Navigate through multiple maze layers connected by portals (colored dots).

02 — Raven's Progressive Matrices

Identify the missing panel in a 3×3 matrix governed by transformation rules.

03 — Cellular Automata Forward Prediction

Given a rule and initial state, predict the next state of a cellular automaton.

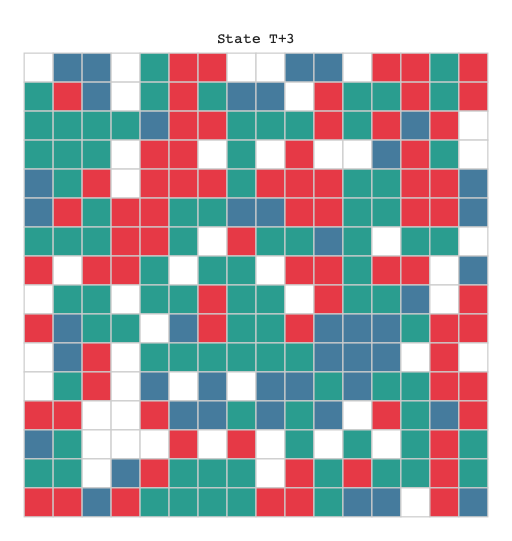
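To make the forward-prediction task concrete, the sketch below advances a binary grid by one step. Conway's Game of Life (birth on 3 neighbors, survival on 2 or 3) with dead cells beyond the border is used purely as an illustrative assumption; this card does not state which rules or boundary conditions TACIT uses, and `life_step` is a hypothetical helper, not part of the harness.

```python
def life_step(grid):
    """Advance a 0/1 grid one step under Conway's Game of Life (assumed rule)."""
    rows, cols = len(grid), len(grid[0])
    nxt = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            # Count live cells in the 8-neighborhood; out-of-grid cells count as dead.
            n = 0
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    if (dr, dc) == (0, 0):
                        continue
                    rr, cc = r + dr, c + dc
                    if 0 <= rr < rows and 0 <= cc < cols:
                        n += grid[rr][cc]
            # Birth on exactly 3 neighbors, survival on 2 or 3.
            if n == 3 or (grid[r][c] == 1 and n == 2):
                nxt[r][c] = 1
    return nxt
```

A multi-step puzzle (for example, the 3 steps of the medium level) would apply such a function repeatedly to the initial state.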

04 — Cellular Automata Inverse Inference

Given an initial and final state, identify which rule was applied.

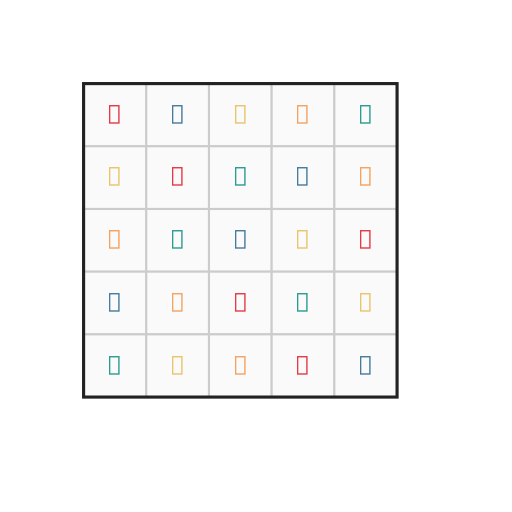

05 — Visual Logic Grids

Complete a constraint-satisfaction grid using visual clues.

06 — Planar Graph k-Coloring

Color graph nodes so no adjacent nodes share the same color.
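The correctness condition for this task can be stated in a few lines. The sketch below checks a coloring given as a plain edge list and a node-to-color mapping; it is an illustration of the constraint only, with a hypothetical function name, and says nothing about how the benchmark extracts colors from a rendered image.

```python
def is_proper_coloring(edges, colors, k):
    """True if every node has one of k colors and no edge joins same-colored nodes."""
    nodes = {n for edge in edges for n in edge}
    if not nodes.issubset(colors):
        return False  # at least one node was left uncolored
    if any(not 0 <= colors[n] < k for n in nodes):
        return False  # a color index outside the allowed palette
    return all(colors[u] != colors[v] for u, v in edges)
```

For a triangle, `is_proper_coloring([(0, 1), (1, 2), (0, 2)], {0: 0, 1: 1, 2: 2}, k=3)` holds, while any attempt with `k=2` fails.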

07 — Graph Isomorphism Detection

Determine whether two graphs have the same structure despite different layouts.

08 — Unknot Detection

Determine whether a knot diagram can be untangled into a simple loop.

09 — Orthographic Projection Identification

Match a 3D object to its correct orthographic projection views.

10 — Isometric Reconstruction

Reconstruct a 3D isometric view from orthographic projections.

Tasks

| # | Task | Domain | Easy | Medium | Hard |
|---|---|---|---|---|---|
| 01 | Multi-layer Mazes | Spatial Reasoning | 8×8, 1 layer | 16×16, 2 layers, 2 portals | 32×32, 3 layers, 5 portals |
| 02 | Raven's Progressive Matrices | Abstract Reasoning | 1 rule | 2 rules | 3 rules, compositional |
| 03 | Cellular Automata Forward | Causal Reasoning | 8×8, 1 step | 16×16, 3 steps | 32×32, 5 steps |
| 04 | Cellular Automata Inverse | Causal Reasoning | 8×8, 4 rules | 16×16, 8 rules | 32×32, 16 rules |
| 05 | Visual Logic Grids | Logical Reasoning | 4×4, 6 constraints | 5×5, 10 constraints | 6×6, 16 constraints |
| 06 | Planar Graph k-Coloring | Graph Theory | 6 nodes, k=4 | 12 nodes, k=4 | 20 nodes, k=3 |
| 07 | Graph Isomorphism | Graph Theory | 5 nodes | 8 nodes | 12 nodes |
| 08 | Unknot Detection | Topology | 3 crossings | 6 crossings | 10 crossings |
| 09 | Orthographic Projection | Spatial Reasoning | 6 faces | 10 faces, 1 concavity | 16 faces, 3 concavities |
| 10 | Isometric Reconstruction | Spatial Reasoning | 6 faces | 10 faces, 1 ambiguity | 16 faces, 2 ambiguities |

Each task has 200 puzzles per difficulty level (easy, medium, hard), giving 600 puzzles per task and 6,000 in total.

Evaluation Tracks

Track 1 — Generative

The model receives a puzzle image and must produce a solution image (e.g., a solved maze, colored graph, completed matrix). Verification is fully programmatic using computer vision:

| Task | Verification Method |
|---|---|
| Maze | BFS path detection on rendered solution |
| Raven | SSIM comparison (threshold 0.997) |
| CA Forward / Inverse | Pixel sampling of cell states |
| Logic Grid | Pixel sampling of grid cells |
| Graph Coloring | Occlusion-aware node color sampling |
| Graph Isomorphism | Color counting + structural validation |
| Unknot | Color region counting |
| Ortho Projection | Pixel sampling of projection views |
| Iso Reconstruction | SSIM comparison (threshold 0.99999) |
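As an illustration of the BFS path detection listed for the maze task, the sketch below assumes the solution image has already been reduced to a grid of booleans marking which cells carry the path color. That preprocessing step, the 4-connectivity, and the function name are assumptions made for this example; the harness's actual implementation is not shown in this card.

```python
from collections import deque

def path_connects(marked, start, goal):
    """Breadth-first search over 4-connected marked cells from start to goal."""
    rows, cols = len(marked), len(marked[0])
    if not (marked[start[0]][start[1]] and marked[goal[0]][goal[1]]):
        return False  # the drawn path must cover both endpoints
    seen = {start}
    queue = deque([start])
    while queue:
        r, c = queue.popleft()
        if (r, c) == goal:
            return True
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and marked[nr][nc] and (nr, nc) not in seen:
                seen.add((nr, nc))
                queue.append((nr, nc))
    return False
```

A full check would also need to confirm that the marked cells do not cross walls and, for multi-layer mazes, that layer changes happen only at portals.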

Track 2 — Discriminative

The model receives a puzzle image and 5 candidate images (1 correct solution and 4 distractors) and must identify the correct one. This is a 5-way multiple-choice visual task.
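A minimal sketch of how a Track 2 item could be assembled and scored is given below. The shuffling step, the helper names, and the use of file names as candidate identifiers are assumptions for illustration; the card does not describe how the harness orders or presents candidates.

```python
import random

def build_choices(solution, distractors, rng):
    """Shuffle 1 solution with its distractors; return (choices, index of solution)."""
    choices = [solution, *distractors]
    rng.shuffle(choices)
    return choices, choices.index(solution)

def accuracy(predictions, answers):
    """Fraction of items where the predicted index equals the correct index."""
    correct = sum(p == a for p, a in zip(predictions, answers))
    return correct / len(answers)

# With 5 candidates per item, uniform random guessing is right 1 time in 5.
CHANCE_ACCURACY = 1 / 5
```

Passing a seeded `random.Random` instance keeps the candidate order reproducible across runs, in line with the benchmark's emphasis on determinism.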

Dataset Structure

    snapshot/
    ├── metadata.json                      # Generation config and parameters
    ├── README.md                          # This file
    ├── task_01_maze/
    │   ├── task_info.json                 # Task parameters
    │   ├── easy/
    │   │   ├── 512/                       # 512px resolution
    │   │   │   ├── puzzle_0000.png
    │   │   │   ├── solution_0000.png
    │   │   │   ├── distractors_0000/
    │   │   │   │   ├── distractor_00.png
    │   │   │   │   ├── distractor_01.png
    │   │   │   │   ├── distractor_02.png
    │   │   │   │   └── distractor_03.png
    │   │   │   ├── puzzle_0001.png
    │   │   │   ├── solution_0001.png
    │   │   │   ├── distractors_0001/
    │   │   │   │   └── ...
    │   │   │   └── ... (200 puzzles)
    │   │   ├── 1024/                      # 1024px resolution
    │   │   │   └── ... (same structure)
    │   │   └── 2048/                      # 2048px resolution
    │   │       └── ... (same structure)
    │   ├── medium/
    │   │   └── ... (same structure)
    │   └── hard/
    │       └── ... (same structure)
    ├── task_02_raven/
    │   └── ...
    └── ... (10 tasks total)

File Naming Convention

- puzzle_NNNN.png: the input puzzle image

- solution_NNNN.png: the ground-truth solution (Track 1 target)

- distractors_NNNN/distractor_0X.png: 4 wrong answers (Track 2 candidates)
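The naming convention can be turned into paths mechanically. The helper below is a hypothetical convenience function, not part of the TACIT package; it assumes four-digit zero-padded puzzle indices and two-digit distractor indices, as in the listing above, and returns paths relative to the directory that contains the task folders.

```python
from pathlib import PurePosixPath

def puzzle_files(task_dir, difficulty, resolution, index):
    """Build relative paths for one puzzle, its solution, and its 4 distractors."""
    base = PurePosixPath(task_dir) / difficulty / str(resolution)
    tag = f"{index:04d}"  # e.g. 7 -> "0007"
    return {
        "puzzle": base / f"puzzle_{tag}.png",
        "solution": base / f"solution_{tag}.png",
        "distractors": [
            base / f"distractors_{tag}" / f"distractor_{i:02d}.png"
            for i in range(4)
        ],
    }
```

For example, `puzzle_files("task_01_maze", "easy", 512, 0)["puzzle"]` gives `task_01_maze/easy/512/puzzle_0000.png`.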

Statistics

| Metric | Value |
|---|---|
| Total puzzles | 6,000 |
| Total PNG files | 108,008 |
| Resolutions | 512, 1024, 2048 px |
| Difficulties | easy, medium, hard |
| Distractors per puzzle | 4 |
| Dataset size | ~3.9 GB |
| Generation seed | 42 |
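The puzzle total can be re-derived from the per-task figures, and the directory layout gives a rough cross-check on the file count. The constant names below are illustrative. Note that the layout accounts for 108,000 PNG files, 8 fewer than the 108,008 reported in the table; this card does not say what the remaining files are.

```python
TASKS = 10
DIFFICULTIES = 3           # easy, medium, hard
PUZZLES_PER_LEVEL = 200
RESOLUTIONS = 3            # 512, 1024, 2048 px
FILES_PER_PUZZLE = 1 + 1 + 4  # puzzle + solution + 4 distractors

puzzles_per_task = PUZZLES_PER_LEVEL * DIFFICULTIES
total_puzzles = puzzles_per_task * TASKS
png_from_layout = total_puzzles * RESOLUTIONS * FILES_PER_PUZZLE

print(puzzles_per_task, total_puzzles, png_from_layout)
```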

Usage

Loading with Hugging Face

    from datasets import load_dataset

    # Load full dataset
    ds = load_dataset("tylerxdurden/TACIT-benchmark")

    # Or download specific files
    from huggingface_hub import hf_hub_download

    puzzle = hf_hub_download(
        repo_id="tylerxdurden/TACIT-benchmark",
        filename="task_01_maze/easy/1024/puzzle_0000.png",
        repo_type="dataset",
    )

Using the Evaluation Harness

    from tacit.registry import GENERATORS

    # Regenerate a specific puzzle (deterministic)
    gen = GENERATORS["maze"]
    puzzle = gen.generate(seed=42, difficulty="easy", index=0)

    # Verify a candidate solution (Track 1)
    # model_output_bytes: PNG bytes produced by the model under evaluation
    is_correct = gen.verify(puzzle, candidate_png=model_output_bytes)

See the GitHub repository for full evaluation documentation.

Reasoning Domains

The 10 tasks span 6 reasoning domains, chosen to probe different aspects of visual cognition:

- Spatial Reasoning — Mazes, orthographic projection, isometric reconstruction

- Abstract Reasoning — Raven's progressive matrices

- Causal Reasoning — Cellular automata (forward prediction and inverse inference)

- Logical Reasoning — Visual logic grids

- Graph Theory — Graph coloring, graph isomorphism

- Topology — Unknot detection

Citation

@misc{medeiros_2026,

author = {Daniel Nobrega Medeiros},

title = {TACIT-benchmark},

year = 2026,

url = {https://huggingface.co/datasets/tylerxdurden/TACIT-benchmark},

doi = {10.57967/hf/7904},

publisher = {Hugging Face}

}

License

Apache 2.0
